How to Automate Data Validation between Oracle Cloud ERP and a Data Warehouse

Oracle Cloud ERP is a SaaS product from Oracle covering General Ledger, Accounts Payable, Accounts Receivable, Fixed Assets, Projects and several other important functional areas. It is a very popular platform and a number of companies have used it as the basis for their migration to the Cloud. Like many of today’s SaaS application vendors, Oracle does not allow direct SQL query access against the underlying database tables of Cloud ERP enabling data privacy and to limit the volume of data being extracted to control the load on the ERP system (in other words, you cannot take a tool like Oracle SQL Developer and establish a connection to the Oracle Cloud ERP database and just start writing queries the way you can with on-premises applications like Oracle E Business Suite).

It is very common for companies to use a data warehouse in conjunction with their ERP system. In such a scenario, data is extracted from the ERP system and fed into the data warehouse. Now we all know that validating data once an ETL/ELT process has completed is an essential process to maintain data integrity. However, it becomes harder when we do not have direct access to the underlying ERP database to compare.

So, if there is no direct access to the Oracle Cloud ERP database, how do people validate data?

They follow a three-step process:

- Extract data from source (Oracle Cloud ERP):

As we have no access to the Cloud ERP database, people extract the data in the form of CSV files by creating BIP data models and reports for each dimension/fact or let us say a test case in terms of testing.

- Extract data from target Data Warehouse:

We are aware that current market has a handful of data warehouses, which are on premise as well as cloud-based. Extract the data into CSV files for their respective test cases.

- Compare the data:

Now validate the source and target CSV files w.r.t test cases for any data discrepancies.

Sounds simple!

Really! Think of doing this for some 30+ test cases.

Exhausted! Don’t we have an alternative?

We do, by using the BI Publisher Web Services, Python and Jenkins we can make the above three-step process automated and generate test reports.

How? Let us get into it

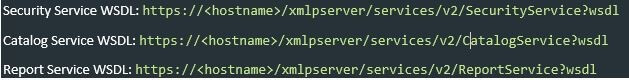

For this we used below BI Publisher, web services

Refer to BI Publisher Web Services for all other services and methods.

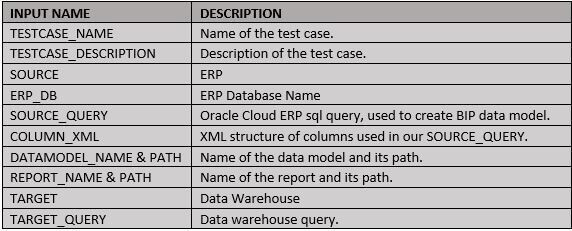

In addition, we need an input CSV/Excel file with below inputs for all test cases and a parameter file with source and target connection details to the program.

I have used Python as I felt it is simpler compared to Java and Jenkins to generate test report and make utilize the Test Results Analyzer.

Below is the hierarchy of steps in our program

- Login to Oracle Cloud ERP

- Create Data Model with the SOURCE_QUERY input

- Create Report with the data model created in step 2

- Run the report and extract the data to CSV file.

- Connect to target Data Warehouse.

- Run the TARGET_QUERY input.

- Extract the results into a CSV file.

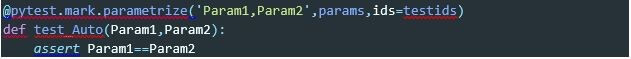

- Validate the results using Pytest module.

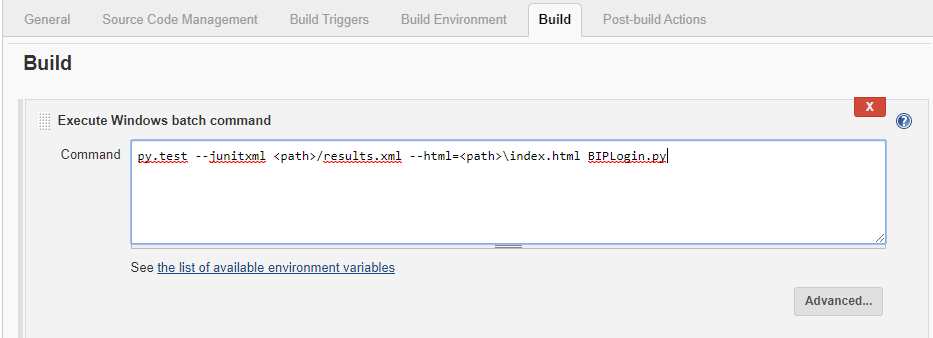

- Run the python script with junitxml and html to generate XML and HTML test reports.

- Create a project in Jenkins and run the python script from Jenkins, use Test Analyzer plugin to analyze your test runs.

Login to Oracle Cloud ERP

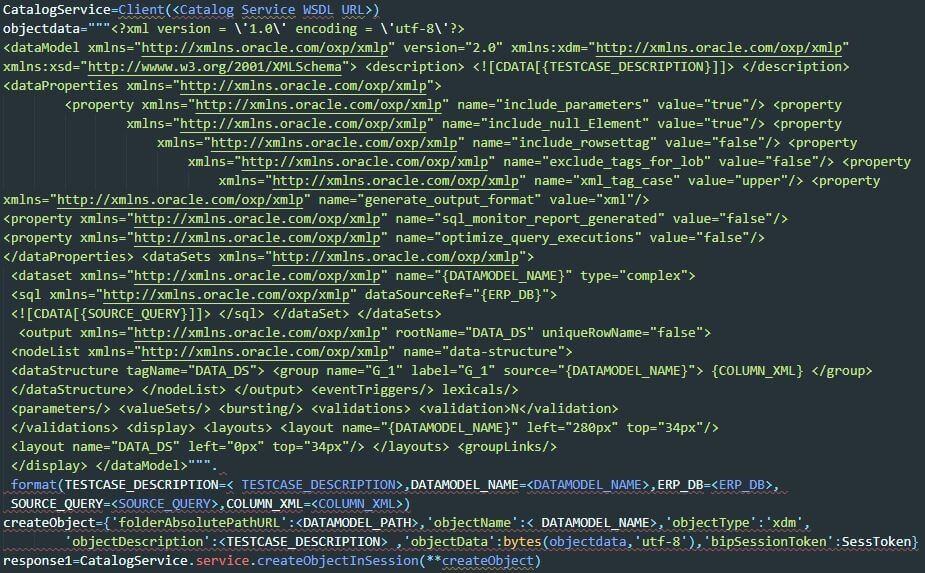

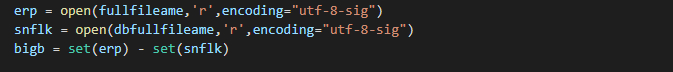

Create BIP Data Model

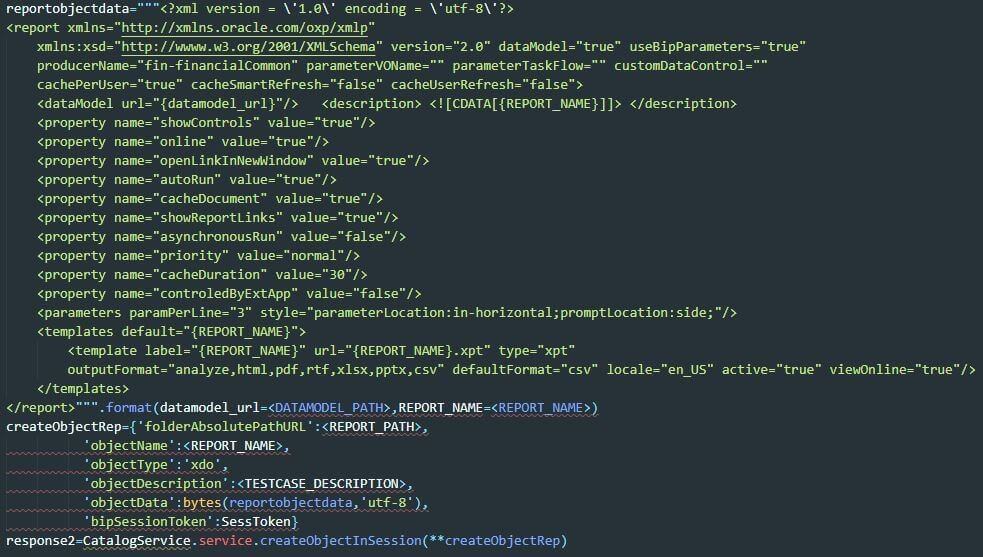

Create BIP Report

Run Report and extract the response to CSV file

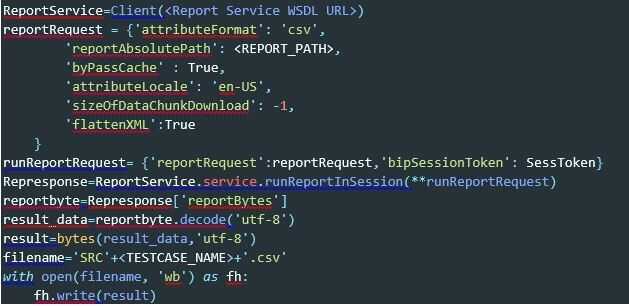

Connect to target data warehouse and extract the data.

Let us do it for SNOWFLAKE, which is a cloud based data warehouse.

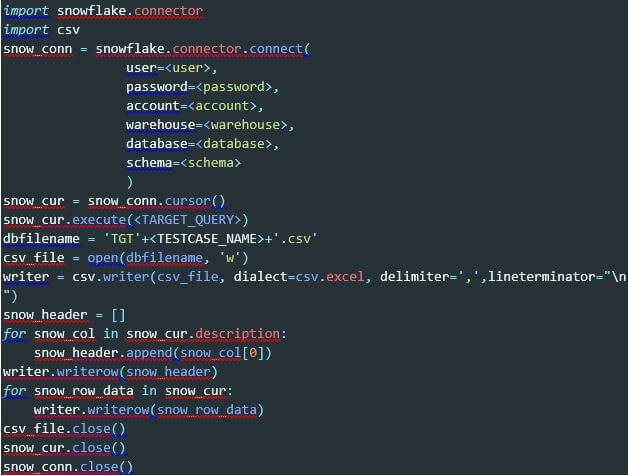

Compare the CSV files for differences

Use Pytest to make a test suite and generate test reports

Create a Jenkins project and run the python script using junitxml and html arguments

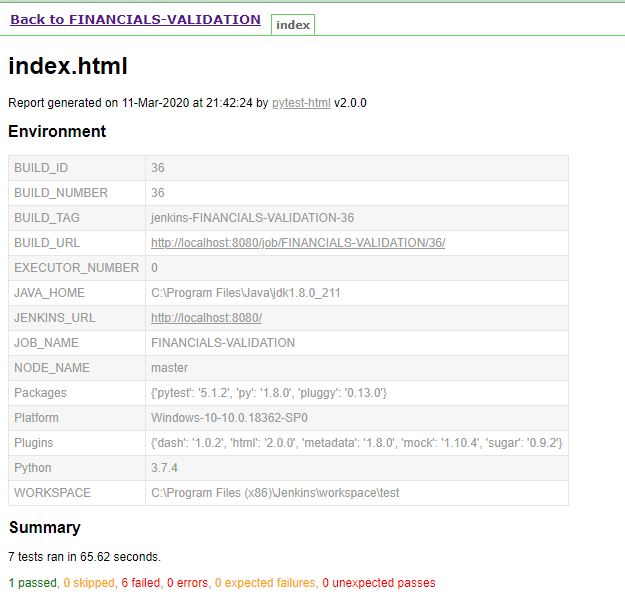

HTML Test report generated for the build in Jenkins

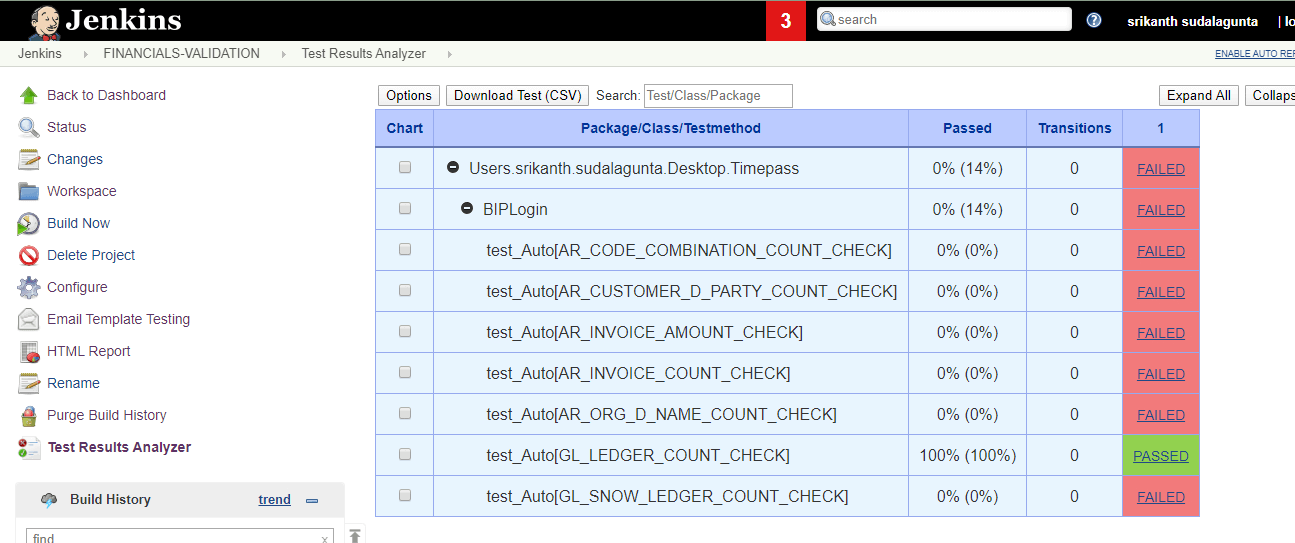

Result analyzer with all test cases and their status

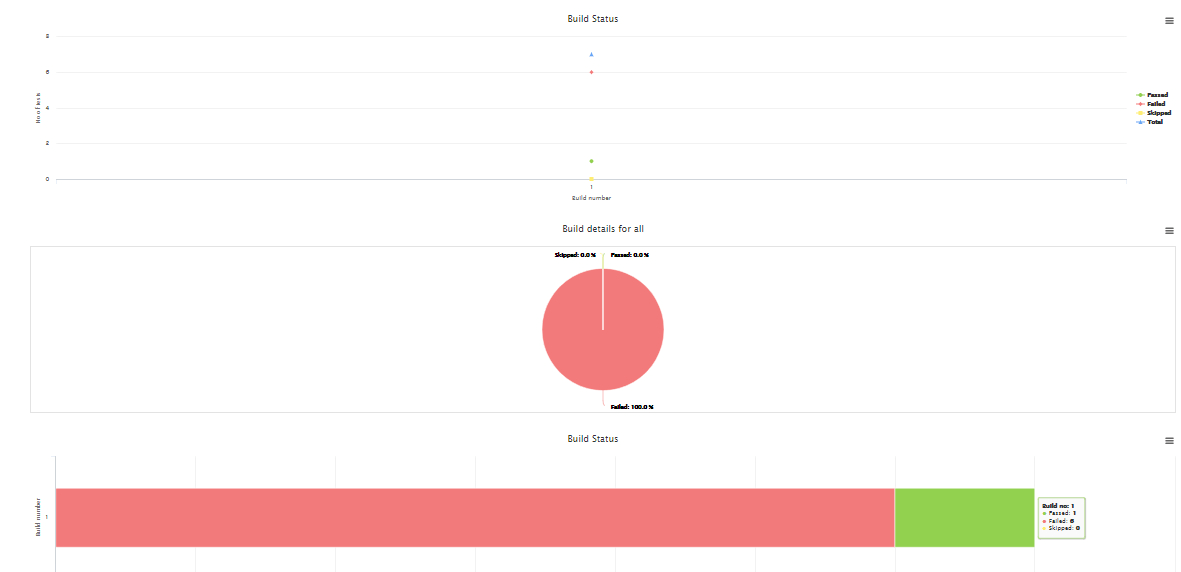

Line, Pie and Bar representation of the test case status in Jenkins

We hope you found this outline and explanation useful and that it helps you more quickly and efficiently validate your data warehouse data against your Oracle Cloud ERP data.

Apps Associates’ Data and Analytics Practice is a team of 125 highly credential professionals dedicated to helping companies leverage their data assets to become data-driven organizations.