IoT-Enabled Analytics with Snowflake on Google Cloud Platform

Introduction

Our Customer is a world leader in mobile robotics, committed to tackling some of the toughest challenges of today that solve for tomorrow and beyond. By combining the principles of dynamic control and balance, with sophisticated mechanical designs, cutting-edge electronics, and next-generation software, company’s robots are able to automate tasks in difficult, dangerous, or unstructured environments. As the company has increasingly focused on commercializing its technology, company knew that its IT infrastructure also needed to grow and expand. The Customer turned to Apps Associates – a Snowflake Select partner with over 20 years of business experience – to help design, build and implement a Snowflake-based Internet of Things (IoT) analytics solution.

Use Case

The Customer needed a scalable analytics solution that better supported its robots in the field – with stronger data capture and analysis – enabling it to meet long-term company goals that now include commercial sales, product reliability and product improvement at scale.

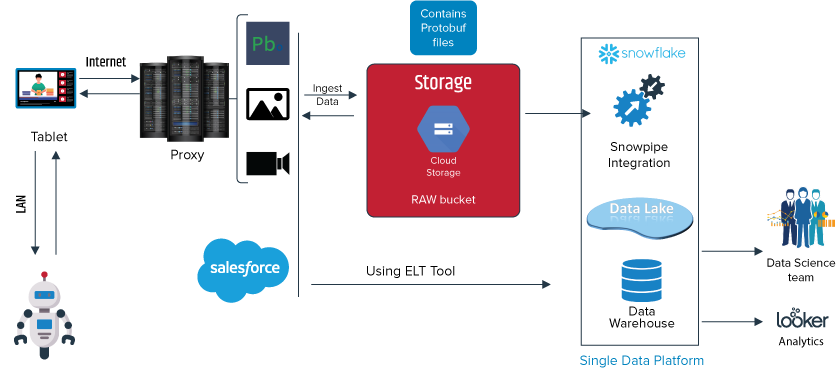

The Customer wanted to have a single data platform which can scale at large and have the capabilities to expand as a Data Lake for future needs. The benefits of data lakes and the advantages of data warehousing along with the availability on major cloud providers made Snowflake a preferred option.

Implementation

The Customer’s robot is an agile mobile robot that navigates terrain with unprecedented mobility, allowing Customers to automate routine inspection tasks and capture data safely, accurately, and frequently.

The Enterprise Data Warehouse Solution was built by sourcing data from IoT devices, that send data securely via the Cloud using MQTT protocol. The data is generated and captured by events and processed using GCP Cloud Functions that store the raw data in the Snowflake Data Lake. The raw data is transformed and combined with the Salesforce information in the Snowflake Enterprise Data Warehouse. The combined data is analyzed for the Robot’s performance through visualizations built using Looker.

IoT (Robot as the Source):

Every day the IoT device takes a snapshot of all its current data and stores it onto a tablet (that is used to communicate with the IoT Device). Each snapshot has a specific ID (sequence number) generated at the time of creation. The Snapshot ID along with the IoT device Serial Number makes the data unique across multiple devices. IoT data from the devices is uploaded at different time intervals from different customers onto the GCP Platform.

Data in Protobuf format captured in the tablet is uploaded to the server at a predefined frequency. Data from the server is then transferred to Google Cloud Storage for temporary staging before it is ingested into Snowflake. Similarly, customer specific information is also extracted from the Salesforce application and ingested into Snowflake as shown below.

Google Cloud Platform (GCP): Streaming IoT data

When the IoT device through the tablet is connected to the Internet, it immediately sends a message to the Proxy server in GCP to identify the last communication from the specific tablet identified by the IoT Serial Number. Once the identification is completed, the Proxy server sends an acknowledgment with the latest Snapshot ID value it contains to the tablet.

Upon receiving the snapshot ID information, the tablet starts to communicate with the Proxy server and using Google Pub/Sub uploads the snapshots to Google Cloud Storage. After all the files are uploaded, proxy server stores the latest snapshot ID against the IoT Device Serial Number and also sends the same acknowledgment to the IoT device.

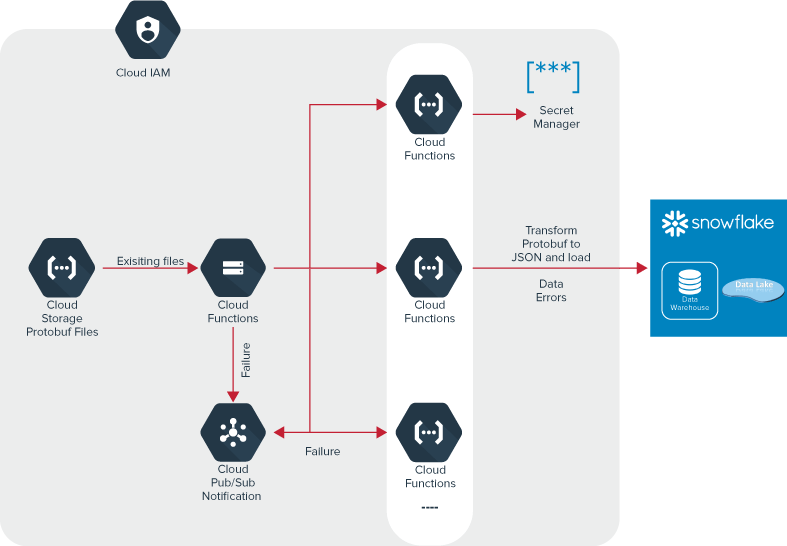

An event is triggered when a file is uploaded into Google Cloud Storage. Using Cloud Storage as the trigger type on the specific bucket, a Cloud Function gets called. The following steps are accomplished within the Cloud Function using a Python script

- Credentials from Secret Manager are retrieved and a connection to Snowflake Data lake is established.

- The IoT device Snapshot file is parsed and validated for errors before ingestion

- The snapshot file is converted into JSON format after validation

- If the file has errors, then a Pub/Sub is invoked and an email is sent to relevant teams for action

- Data is Ingested into Snowflake Data lake

- All connections are closed

Snowflake: Data Loads and Transformations

Data is ingested into Snowflake in JSON format using the VARIANT data type. VARIANT is a semi-structured data type that can store values of any other type, including OBJECT or ARRAY.

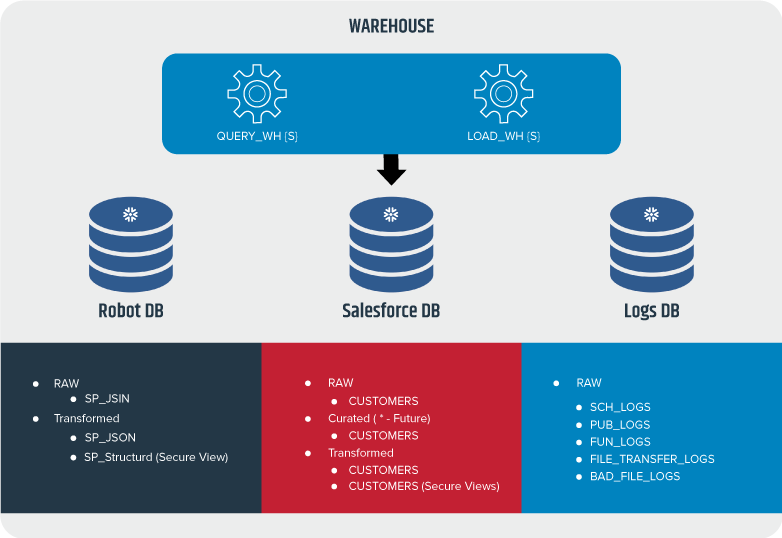

Multiple schemas were created in the Snowflake Data Warehouse to differentiate the data transformation stages for different sources.

- RAW – Raw layer contains data in its most basic format ingested from the source application. Except the Database Administrator, no other user has access to this layer

- Curated – Cleansing activities like data type mismatches, bad data corrections, date / time format alignment are done in this layer. Most super user roles have access to this schema

- Transformed – Data is transformed and aggregated to fulfill business requirements. Data security and masking policies are implemented, roles are created to grant users access to IoT data.

Separate databases were created within the Snowflake Data Warehouse for each source application to easily manage data and associated security needs. One database for IoT data, one for Salesforce enterprise application information and a warehouse database that holds combined enterprise transformed data for end-user reporting needs were built.

Once the JSON data is loaded into RAW Schema, Snowflake in-built streams is used to move the flattened structured data to the curated layer. Salesforce data that is extracted to the GCP storage bucket is loaded into Snowflake using Streams and Tasks. Secure views to mask specific PII data points are created to control data that can be visible across different departments.

Looker: Data Visualization

Security of the above schemas is managed using different roles. A specific shared role is granted to a Looker user that is used to build different visualizations.

Summary

The organization has been able to continuously monitor the IoT devices over a period of time and analyze performance of the Robots by customer, industry, and software versions. Testing log data that has been integrated in the data warehouse also provides an insight into the issues and resolutions associated with the Robots. Future updates to the robots can be better planned based on analyzed customer usage.

Visit our Data and Analytics webpage to learn more. Download our case study here.